HFI data compression

Revision as of 16:06, 22 July 2014

Data compression

Data compression scheme

The output of the readout electronics unit (REU) consists of one value for each of the 72 science channels (bolometers and thermometers) for each modulation half-period. This number, <math>S_{REU}</math>, is the sum of the 40 16-bit analog-to-digital converter (ADC) signal values measured within the given half-period. The data processor unit (DPU) performs a lossy quantization of <math>S_{REU}</math>.

We define a compression slice of 254 <math>S_{REU}</math> values, corresponding to about 1.4 s of observation for each detector and to a strip on the sky about 8 degrees long. The mean <math>\langle S_{REU} \rangle</math> of the data within each compression slice is computed, and data are demodulated using this mean:

<math>S_{demod,i} = (S_{REU,i} - \langle S_{REU} \rangle) \cdot (-1)^i</math>

where <math>1 \le i \le 254</math> is the running index within the compression slice.

The mean <math>\langle S_{demod} \rangle</math> of the demodulated data <math>S_{demod,i}</math> is computed and subtracted, and the resulting slice data is quantized according to a step size <math>Q</math> that is fixed per detector:

<math>S_{DPU,i} = \mathrm{round}\left( \frac{S_{demod,i} - \langle S_{demod} \rangle}{Q} \right)</math>

This is the lossy part of the algorithm: the required compression factor, obtained through the tuning of the quantization step <math>Q</math>, adds a noise of variance <math>Q^2/12</math> to the data. This will be discussed below.

The two means <math>\langle S_{REU} \rangle</math> and <math>\langle S_{demod} \rangle</math> are computed as 32-bit words and sent through the telemetry, together with the <math>S_{DPU,i}</math> values. Variable-length encoding of the <math>S_{DPU,i}</math> values is performed on board, and the inverse decoding is applied on ground.
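The slice-level steps described above (mean removal, demodulation, second mean removal, rounding to a step) can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not the on-board DPU code: the function names are hypothetical, the means are kept as floats rather than 32-bit words, the sign convention of the demodulation (which sample gets the plus sign) is assumed, and the variable-length encoding stage is left out.

```python
import random

SLICE_LEN = 254  # samples per compression slice, as stated in the text


def compress_slice(s_reu, q):
    """Return (mean_reu, mean_demod, codes) for one slice of REU values.

    Hypothetical helper: floats stand in for the 32-bit means sent on board.
    """
    n = len(s_reu)
    mean_reu = sum(s_reu) / n
    # Demodulate: remove the slice mean, then flip the sign of every other sample.
    demod = [(s - mean_reu) * (-1) ** i for i, s in enumerate(s_reu)]
    mean_demod = sum(demod) / n
    # Lossy step: keep only the nearest integer number of steps of size q.
    codes = [round((d - mean_demod) / q) for d in demod]
    return mean_reu, mean_demod, codes


def decompress_slice(mean_reu, mean_demod, codes, q):
    """Invert compress_slice up to the rounding error (at most q/2 per sample)."""
    demod = [c * q + mean_demod for c in codes]
    return [d * (-1) ** i + mean_reu for i, d in enumerate(demod)]


# Toy slice: constant offset + square-wave modulation + white noise (sigma = 100).
rng = random.Random(0)
sigma = 100.0
toy = [1.0e6 + 5000.0 * (-1) ** i + rng.gauss(0.0, sigma) for i in range(SLICE_LEN)]
step = sigma / 2.5
m_reu, m_demod, toy_codes = compress_slice(toy, step)
restored = decompress_slice(m_reu, m_demod, toy_codes, step)
print("largest reconstruction error:", max(abs(a - b) for a, b in zip(toy, restored)))
print("largest |code|:", max(abs(c) for c in toy_codes))
```

After the two means are removed, the integer codes only span a few noise standard deviations, which is what makes the subsequent variable-length encoding efficient.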

Performance of the data compression during the mission

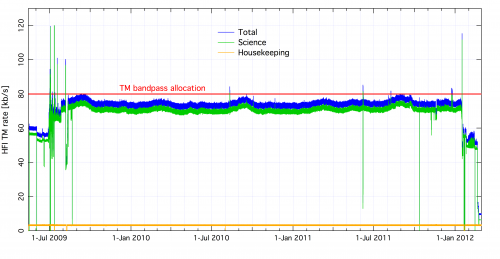

Optimal use of the telemetry bandwidth available for the downlink was obtained initially by using a value of <math>Q = \sigma/2.5</math> for all bolometer signals. After 12 December 2009, and only for the 857 GHz detectors, the value was reset to <math>Q = \sigma/2.0</math> to avoid data loss due to exceeding the limit of the downlink rate. With these settings the load during the mission never exceeded the allowed bandwidth, as shown in the figure.

Setting the quantization step in flight

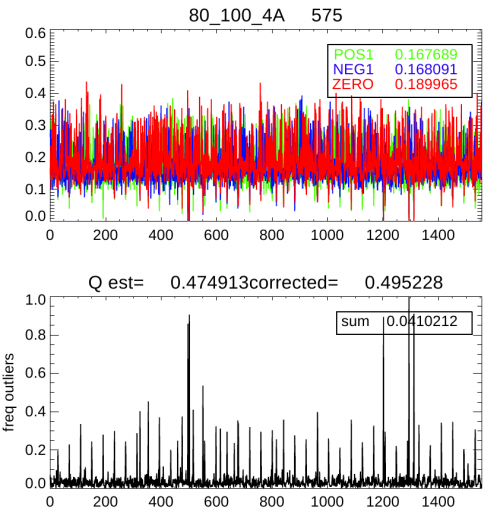

The only parameter that enters the PLANCK High Frequency Instrument (HFI) compression algorithm is the size of the quantization step, in units of <math>\sigma</math>, the white noise standard deviation for each channel. It has been adjusted during the mission by studying the mean frequency <math>p_0</math> of the central quantization bin <math>[-Q/2, Q/2]</math> within each compression slice (254 samples). For pure Gaussian noise, this frequency is related to the step size (in units of <math>\sigma</math>) by

<math>\hat Q = 2\sqrt{2}\,\mathrm{erf}^{-1}(p_0) \simeq 2.5\, p_0</math>

where the linear approximation holds for small <math>p_0</math>. In PLANCK, however, the channel signal is not purely Gaussian, since glitches and the periodic crossing of the Galactic plane add some strong outliers to the distribution. By using the frequency <math>p_\text{out}</math> of these outliers, simulations show that the following formula gives a valid estimate:

<math>\hat Q_\text{cor} = 2.5\, \frac{p_0}{1 - p_\text{out}}</math>

The figure shows an example of the timelines of these quantities that were used to monitor and adjust the quantization setting.
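The relation between the central-bin frequency and the step size can be illustrated with the standard library alone. The sketch below assumes unit-variance Gaussian noise, for which the probability of falling in the central bin of width Q is erf(Q / (2·sqrt(2))). The function names are hypothetical, and the outlier correction shown (rescaling the central-bin frequency by the fraction of non-outlier samples) is an assumption about the form of the correction, not a confirmed transcription of the on-ground monitoring code.

```python
import math
from statistics import NormalDist


def central_bin_frequency(q):
    """Probability that unit-variance Gaussian noise falls in [-q/2, q/2]."""
    return math.erf(q / (2.0 * math.sqrt(2.0)))


def q_exact(p0):
    """Invert central_bin_frequency: step size (in units of sigma) from p0."""
    # P(|x| < q/2) = 2*Phi(q/2) - 1, hence q = 2 * Phi^{-1}((1 + p0) / 2).
    return 2.0 * NormalDist().inv_cdf(0.5 * (1.0 + p0))


def q_linear(p0):
    """Small-p0 approximation; the exact slope is sqrt(2*pi), about 2.507."""
    return 2.5 * p0


def q_corrected(p0, p_out):
    """Assumed outlier correction: outliers never populate the central bin."""
    return 2.5 * p0 / (1.0 - p_out)


for q in (0.4, 0.5, 1.0):
    p0 = central_bin_frequency(q)
    print(f"Q={q:.2f}  p0={p0:.4f}  exact={q_exact(p0):.4f}  linear={q_linear(p0):.4f}")
```

For step sizes around the in-flight values the linear estimate stays within about one percent of the exact inversion, and the discrepancy grows as the central bin becomes wider.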

Impact of the data compression on science

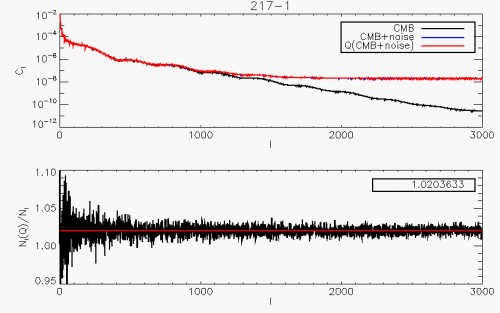

The effect of a pure quantization process of step <math>Q</math> (in units of <math>\sigma</math>) on the statistical moments of a signal is well known [1]. When the step is typically below the noise level (which is largely the case for PLANCK), one can apply the Quantization Theorem, which states that the process is equivalent to the addition of a uniform random noise in the <math>[-Q/2, Q/2]</math> range. The net effect of quantization is therefore to add quadratically to the signal a variance of <math>Q^2/12</math>. For the in-flight settings <math>Q = 0.4</math> and <math>Q = 0.5</math> this corresponds to a noise level increase of about 0.7% and 1%, respectively. The spectral effect of the non-linear quantization process is theoretically much more complicated and depends on the details of the signal and noise. As a rule of thumb, a pure quantization adds some auto-correlation that is suppressed by a factor <math>\exp[-4\pi^2(\frac{\sigma}{Q})^2]</math> [2].

Note, however, that PLANCK does not perform a pure quantization process. A baseline that depends on the data (the mean of each compression slice) is subtracted. Furthermore, for the science data, circles on the sky are coadded. Coaddition is performed again when projecting the rings onto the sky (map-making).

To study the full effect of the PLANCK-HFI data compression algorithm on our main science products, we have simulated a realistic data timeline corresponding to the observation of a pure cosmic microwave background (CMB) sky. The compressed/decompressed signal was then back-projected onto the sky using the PLANCK scanning strategy. The two maps were analyzed using the anafast HEALPix procedure and the two reconstructed power spectra were compared. The result is shown in the figure.

It is remarkable that the full procedure of baseline-subtraction+quantization+ring-making+map-making still leads to the increase of the variance that is predicted by the simple timeline quantization, <math>Q^2/12</math>. Furthermore, we checked that the noise added by the compression algorithm is white.

The compression is not expected to introduce any non-Gaussianity, since the pure quantization process does not add any skewness and adds less than 0.001 of kurtosis, and the coaddition of circles and then rings erases any non-Gaussian contribution according to the Central Limit Theorem.
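The uniform-noise picture of pure quantization discussed in this section is easy to check numerically. The sketch below is a toy Monte Carlo on unit-variance Gaussian samples, not the PLANCK simulation chain: it quantizes the samples with step Q, measures the variance of the rounding error, and compares it with the standard uniform-noise value Q^2/12. It also evaluates the rule-of-thumb suppression factor for the added auto-correlation. The function names, sample count and seed are arbitrary choices made for the illustration.

```python
import math
import random


def quantize(x, q):
    """Round x to the nearest multiple of the step q."""
    return q * round(x / q)


def added_variance(q, n=200_000, seed=0):
    """Monte Carlo variance of the quantization error for N(0, 1) samples."""
    rng = random.Random(seed)
    errors = []
    for _ in range(n):
        x = rng.gauss(0.0, 1.0)
        errors.append(quantize(x, q) - x)
    mean = sum(errors) / n
    return sum((e - mean) ** 2 for e in errors) / n


def autocorr_suppression(q):
    """Rule-of-thumb factor exp[-4 pi^2 (sigma/Q)^2], with q in units of sigma."""
    return math.exp(-4.0 * math.pi ** 2 / q ** 2)


for q in (0.4, 0.5):
    predicted = q ** 2 / 12.0
    noise_increase = math.sqrt(1.0 + predicted) - 1.0
    print(f"Q={q}: simulated {added_variance(q):.5f}, predicted {predicted:.5f}, "
          f"noise level +{100 * noise_increase:.2f}%, "
          f"suppression {autocorr_suppression(q):.1e}")
```

With a step of about half the noise standard deviation the suppression factor is vanishingly small, which is consistent with the added noise being white.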

[1] A Study of Rough Amplitude Quantization by Means of Nyquist Sampling Theory, B. Widrow, IRE Transactions on Circuit Theory, CT-3(4), 266-276, (1956).

[2] On the autocorrelation function of quantized signal plus noise, E. Banta, IEEE Transactions on Information Theory, 11, 114-117, (1965).
