HFI-Validation

The HFI validation is mostly modular: each part of the pipeline, be it timeline processing, map-making, or any other, validates the results of its work at each step of the processing. In addition, we perform further validation with an eye towards overall system integrity. These are described below.

Expected systematics and tests (bottom-up approach)

Like all experiments, Planck/HFI had a number of "issues" which it needed to track and verify were not compromising the data. While these are discussed in appropriate sections, here we gather them together to give brief summaries of the issues and refer the reader to the appropriate section for more details.

- Cosmic Rays - Unprotected by the atmosphere and more sensitive than previous bolometric experiments, HFI was subjected to many more cosmic ray hits than its predecessors. These were detected, the worst parts of the data flagged as unusable, and "tails" were modeled and removed. This is described in XXXXX

- Elephants - Cosmic rays also hit the 100 mK stage and cause the temperature to vary, inducing small temperature and thus noise variations in the detectors. This is described in XXXXX

- 1.6 K Stage Fluctuations

- 4 K Stage Fluctuations

- Popcorn Noise - Some channels were occasionally affected by what seems to be a "split-level" noise, which has been variously called popcorn noise or random telegraphic signal. These data are usually flagged. This is described in XXXXX

- Jumps - Similar to but distinct from popcorn noise, small jumps were occasionally found in the data streams. These data are usually corrected. This is described in XXXXX.

- 4 K Cooler-Induced EM Noise - The 4 K cooler induced noise in the detectors with very specific frequency signatures, which is filtered. This is described in XXXXX.

- 4 K Cooler-Induced Microphonics - The mechanical cooler was shown in XXXXX to cause very little microphonic parasitic signal in the detector data.

- Pointing-Change Microphonics - The changes in pointing after each pointing period were shown in XXXXX to cause very little microphonic parasitic signal in the detector data.

- Compression - Onboard compression is used to overcome our telemetry bandwidth limitations. This is explained in XXXXX.

- Noise Correlations - Correlations in noise between detectors seem to be negligible, except for two polarization-sensitive detectors in the same horn. This is discussed in XXXXX.

- Electrical Cross-Talk - Cross-talk is discussed in XXXXX.

- Pointing - The final pointing reconstruction for Planck is near the arcsecond level. This is discussed in XXXXX.

- Focal Plane Geometry - The relative positions of the different horns in the focal plane are reconstructed using planets. This is discussed in XXXXX.

- Main Beam - The main beams for HFI are discussed in XXXXX.

- Ruze Envelope - Random imperfections or dust on the mirrors can increase the size of the beam a bit. This is discussed in XXXXX.

- Dimpling - The mirror support structure causes a pattern of small imperfections in the beams, which cause small sidelobe responses outside the main beam. This is discussed in XXXXX.

- Far Sidelobes - Small amounts of light can sometimes hit the detectors from just above the primary or secondary mirrors, or even from reflecting off the baffles. While small, when the Galactic center is in the right position, this can be detected in the highest frequency channels, so this is removed from the data. This is discussed in XXXXX.

- Planet Fluxes - Comparing the known fluxes of planets with the calibration on the CMB dipole is a useful check of calibration. This is done in XXXXX.

- Point Source Fluxes - As with planet fluxes, we also compare fluxes of known, bright point sources with the CMB dipole calibration. This is done in XXXXX.

- Time Constants - The HFI bolometers do not react instantaneously to light; there are small time constants, discussed in XXXXX.

- ADC Correction - The HFI Analog-to-Digital Converters are not perfect, and are not used perfectly. Their effects on the calibration are discussed in XXXXX.

- Gain changes with Temperature Changes

- Optical Cross-Talk - This is discussed in XXXXX.

- Bandpass - The transmission curves, or "bandpasses", have shown up in a number of places. This is discussed in XXXXX and YYYYY.

- Saturation - While this is mostly an issue only for Jupiter observations, it should be remembered that the HFI detectors cannot observe arbitrarily bright objects. This is discussed in XXXXX.

Generic approach to systematics

While we track and try to limit the individual effects listed above, and we do not believe there are other large effects which might compromise the data, we test this using a suite of general difference tests. As an example, the first and second years of Planck observations used almost exactly the same scanning pattern (they differed by one arc-minute at the Ecliptic plane). By differencing them, the fixed sky signal is almost completely removed, and we are left with only time variable signals, such as any gain variations and, of course, the statistical noise.

In addition, while Planck scans the sky twice a year, during the first six months (or survey) and the second six months (the second survey), the orientations of the scans and optics are actually different. Thus, by forming a difference between these two surveys, in addition to similar sensitivity to the time-variable signals seen in the yearly test, the survey difference also tests our understanding and sensitivity to scan-dependent noise such as time constant and beam asymmetries.
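The logic of these difference tests can be illustrated with a toy example. The sketch below is not the HFI pipeline: it assumes a one-dimensional "sky" of independent pixels, purely white noise, and hypothetical amplitudes, and all function names are invented for the illustration. It only shows that a half-difference of two observations of the same sky cancels the fixed signal and leaves the noise.

```python
import random
import statistics


def simulate_survey(sky, noise_sigma, rng):
    """Return one toy observation: the fixed sky plus independent white noise."""
    return [s + rng.gauss(0.0, noise_sigma) for s in sky]


def half_difference(map_a, map_b):
    """Pixel-wise half-difference; any signal common to both maps cancels."""
    return [(a - b) / 2.0 for a, b in zip(map_a, map_b)]


rng = random.Random(0)
n_pix = 20000
sky_sigma, noise_sigma = 100.0, 1.0  # hypothetical units, sky >> noise

sky = [rng.gauss(0.0, sky_sigma) for _ in range(n_pix)]
year1 = simulate_survey(sky, noise_sigma, rng)
year2 = simulate_survey(sky, noise_sigma, rng)

null_map = half_difference(year1, year2)
null_std = statistics.pstdev(null_map)
# Two independent noise draws of width sigma give a half-difference of width sigma / sqrt(2).
expected_std = noise_sigma / 2 ** 0.5

print(f"std of one year map : {statistics.pstdev(year1):.2f}")
print(f"std of the null map : {null_std:.3f} (expected ~{expected_std:.3f})")
```

In real data, any excess of the null-map scatter over the noise expectation would point to a time-variable or scan-dependent effect of the kind described above.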

These tests use the Yardstick simulations below and culminate in the "Probabilities to Exceed" tests just after.

HFI simulations

The 'Yardstick' simulations allow us to gauge various effects and to decide whether they need to be included in the Monte Carlo simulations in order to describe the data. They also allow us to gauge the significance of validation tests on the data (e.g. can a null test be described by the model?). They are complemented by dedicated 'Desire' simulations (Desire stands for DEtector SImulated REsponse), as well as by Monte Carlo simulations of the beam determination, used to estimate the beam uncertainties.

Yardstick simulations

The Yardstick V3.0 characterizes the DX9 data, which are the basis of the data release. It goes through the following steps:

- The input maps are computed using the Planck Sky Model, taking the RIMO bandpasses as input.

- LevelS is used to project the input maps onto timelines using the RIMO (B-spline) scanning beam and the DX9 pointing (called ptcor6). The real pointing is affected by aberration, which is corrected in the map-making. The Yardstick does not simulate aberration, so the difference between the pointing projected in the simulation and that of DX9 is equal to the aberration.

- The simulated noise timelines, which are added to the projected signal, have the same spectrum (at low and high frequency) as the DX9 noise. Although correlations in time and between detectors are detectable, none have been simulated for the Yardstick V3.0.

- The simulation map-making step uses the DX9 sample flags.

- For the low frequencies (100, 143, 217 and 353 GHz), the Yardstick outputs are calibrated using the same mechanism (e.g. dipole fitting) as DX9. This calibration step is not performed for the higher frequencies (545 and 857 GHz), which use a different principle.

- The official map-making is run on those timelines using the same parameters as for the real data.

A Yardstick production is composed of

- all survey maps (1, 2 and nominal),

- all detector sets (Detsets), from individual detectors to full channel maps.

The Yardstick V3.0 is based on 5 noise iterations for each map realization.

NB1: the Yardstick product is also the validating set for other implementations which do not use the HFI DPC production codes. An example is the set of so-called FFP simulations, where FFP stands for Full Focal Plane, which are produced jointly by HFI and LFI. This is further described in HL-sims.

NB2: A dedicated version has been used for Monte-Carlo simulations of the beams determination, or MCB. See Pointing&Beams#Simulations_and_errors

Desire simulations

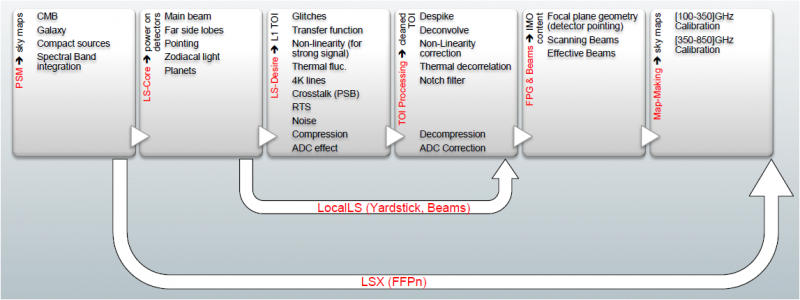

Complementary to the Yardstick simulations, the Desire simulations are used in conjunction with the actual TOI processing in order to investigate the impact of some systematics. The Desire pipeline simulates the response of the HFI instrument, including the non-linearity of the bolometers, the time transfer function of the readout electronics chain, the conversion from sky power to ADU signal, and the compression of the science data. It also includes various components of the noise, such as glitches, white and colored noise, one-over-f noise and RTS noise. Combined with the Planck Sky Model and LevelS tools, the Desire pipeline produces very realistic simulations, compatible with the format of the output Planck HFI data, including science and housekeeping data. It goes through the following steps (see Fig. Desire End-to-End Simulations):

- The input maps are computed using the Planck Sky Model, taking the RIMO bandpasses as input;

- LevelS is used to project the input maps into time-ordered information (TOIs), as described for the Yardstick simulations;

- The TOIs of the simulated sky are injected into the Desire pipeline to produce TOIs in ADU, after adding instrument systematics and noise components;

- The official TOI processing is applied on simulated data as done on real Planck-HFI TOIs;

- The official map-making is run on those processed timelines using the same parameters as for real data.

The Desire simulation pipeline allows us to explore systematics such as 4K lines or glitch residuals remaining after correction by the official TOI processing, as described below.

Simulations versus data

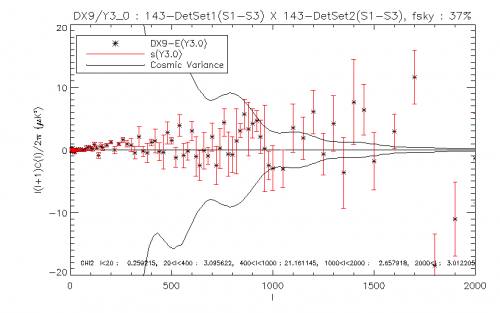

The significance of the various difference tests performed on the data can be assessed, in particular, by comparing them with Yardstick realisations.

The Yardstick production contains sky (generated with LevelS starting from PSM V1.77) and noise timeline realisations processed with the official map-making. The DX9 production was regenerated with the same code in order to remove possible differences that might arise from not running the official pipeline under the same conditions.

We compare the statistical properties of the cross spectra of null test maps for the 100, 143, 217 and 353 GHz channels. Null test maps can be either survey null tests or half focal plane null tests, each of which has a specific goal:

- survey1-survey2 (S1-S2) null tests aim at isolating transfer function or pointing issues, while

- half focal plane null tests focus on beam issues.

By comparing cross spectra we isolate systematic effects from the noise, and we can check whether they are properly simulated or still need to be. Spectra are computed with Spice, masking either the DX9 point sources or the simulated point sources, and masking the Galactic plane with several mask widths; the sky fractions from which the spectra are computed are around 30%, 60% and 80%.
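Why a cross spectrum separates a common component from the noise can be seen in a simple real-space analogue. The sketch below is an illustration only, not the Spice computation: it replaces spectra on the sphere by zero-lag mean products of toy pixel vectors, and the amplitudes and names are hypothetical. Two maps share a common component but have independent noise; the auto product is biased by the noise variance, while the cross product is not.

```python
import random


def mean_product(map_a, map_b):
    """Mean of the pixel-wise product: a zero-lag power estimate."""
    return sum(a * b for a, b in zip(map_a, map_b)) / len(map_a)


rng = random.Random(1)
n_pix = 50000
common_sigma, noise_sigma = 1.0, 2.0  # hypothetical: noise dominates each map

# Component shared by both maps (e.g. a residual systematic effect).
common = [rng.gauss(0.0, common_sigma) for _ in range(n_pix)]
# Each map gets its own independent noise realisation.
map_a = [c + rng.gauss(0.0, noise_sigma) for c in common]
map_b = [c + rng.gauss(0.0, noise_sigma) for c in common]

auto_power = mean_product(map_a, map_a)   # ~ common variance + noise variance
cross_power = mean_product(map_a, map_b)  # ~ common variance only

print(f"auto  power: {auto_power:.2f} (common + noise = {common_sigma**2 + noise_sigma**2:.2f})")
print(f"cross power: {cross_power:.2f} (common only    = {common_sigma**2:.2f})")
```

The noise still contributes to the scatter of the cross estimate, which is why the comparison with simulated realisations remains necessary.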

The DX9 and Y3.0 spectra are binned. For each bin we compute the statistical parameters (mean and variance) of the Yardstick distribution. The following figure is a typical example of a consistency test: it shows the differences between the Y3.0 mean and DX9, relative to the standard deviation of the Yardstick. We also indicate chi-square values, which are computed within larger bins, [0, 20], [20, 400], [400, 1000], [1000, 2000] and [2000, 3000], using the ratio between (DX9 - Y3.0 mean)^2 and the Y3.0 variance within each bin. This binned chi-square is only indicative: it may not always be significant, since DX9 variations sometimes disappear when they are averaged over a bin, the mean then being at the same scale as the Yardstick one.
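As an illustration of this binned statistic, here is a minimal sketch under stated assumptions. It takes one possible reading of the description: values are first averaged within each large bin, and the squared difference between the data average and the mean of the simulated averages is divided by the variance of the simulated averages. The function names are hypothetical and the inputs are random numbers, not DX9 or Yardstick spectra.

```python
import random
import statistics

# Multipole bin edges quoted in the text.
BIN_EDGES = [0, 20, 400, 1000, 2000, 3000]


def bin_average(values, ells, lo, hi):
    """Average the values whose multipole falls in [lo, hi)."""
    return statistics.mean(v for v, ell in zip(values, ells) if lo <= ell < hi)


def binned_chi2(ells, data, sims, edges=BIN_EDGES):
    """Return one (data - sim mean)^2 / sim variance value per bin.

    data: one spectrum; sims: list of simulated spectra on the same multipoles.
    Assumes every bin contains at least one multipole and len(sims) >= 2.
    """
    chi2 = []
    for lo, hi in zip(edges, edges[1:]):
        data_avg = bin_average(data, ells, lo, hi)
        sim_avgs = [bin_average(sim, ells, lo, hi) for sim in sims]
        sim_mean = statistics.mean(sim_avgs)
        sim_var = statistics.variance(sim_avgs)  # sample variance across realisations
        chi2.append((data_avg - sim_mean) ** 2 / sim_var)
    return chi2


# Toy inputs: 5 simulated realisations, matching the 5 noise iterations of Y3.0.
rng = random.Random(2)
ells = list(range(2, 3000))
sims = [[rng.gauss(0.0, 1.0) for _ in ells] for _ in range(5)]
data = [rng.gauss(0.0, 1.0) for _ in ells]

for lo, hi, value in zip(BIN_EDGES, BIN_EDGES[1:], binned_chi2(ells, data, sims)):
    print(f"[{lo}, {hi}): chi2 = {value:.2f}")
```

With only a handful of realisations the variance estimate is itself noisy, which is one more reason to treat such values as indicative.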

A link to a (large) PDF file with all of these plots, and/or a visualisation page, will be provided here.

Systematics Impact Estimates

Cosmic Rays

We have used Desire simulations to investigate the impact of glitch residuals at 143 GHz. Recall that the TOIs are strongly affected by cosmic rays, which induce glitches in the timelines. While the peak of each glitch is flagged and removed from the data, the glitch tail is subtracted from the signal during the TOI processing. We have quantified the efficiency of the official TOI processing and its impact on the scientific signal.

1.6K and 4K stages Fluctuations

RTS Noise

Split-Level Noise

Cross-Talk

Time Transfer Function Uncertainty

4K lines Residuals

Saturation

The Planck-HFI signal is converted into a digital signal (the raw signal) by a 16-bit ADC. This signal is expressed in ADU, from 1 to 65535, and is centered around 32768. Full saturation of the ADC corresponds to a value of 1310680 ADU, which is the number of samples per half period, N_sample, times 2^15 - 1. Nevertheless, saturation of the ADC starts to appear as soon as a fraction of the raw signal hits the value 32767 (2^15 - 1).

We have used the SEB tool (Simulation of Electronics and Bolometer) to simulate the response of the readout electronics chain at very high sampling, in order to mimic the high-frequency behavior of the chain and investigate sub-period effects. These simulations show that saturation of the ADC starts to appear if the signal is more than 7*10^5 to 8*10^5 ADU. Hence the variation of the gain due to ADC saturation has an impact only when crossing Jupiter with the SWB 353 GHz and SWB 857 GHz bolometers. This effect can be neglected.
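The numbers quoted in this section can be cross-checked with a few lines of arithmetic. The sketch below uses only the values given in the text; the value of N_sample it prints is an inference from those values (the full-saturation level divided by the largest excursion from the ADC center, 2^15 - 1 = 32767), not a figure stated in this section.

```python
ADC_BITS = 16
MAX_EXCURSION = 2 ** (ADC_BITS - 1) - 1  # 32767, largest excursion above the ADC center
FULL_SATURATION_ADU = 1_310_680          # full-saturation level quoted in the text
ONSET_RANGE_ADU = (7e5, 8e5)             # onset of saturation quoted from the SEB simulations

# Number of samples per half period implied by the quoted numbers (an inference).
n_sample, remainder = divmod(FULL_SATURATION_ADU, MAX_EXCURSION)

# Onset of saturation expressed as a fraction of the full-saturation level.
onset_fraction = tuple(level / FULL_SATURATION_ADU for level in ONSET_RANGE_ADU)

print(f"implied N_sample = {n_sample} (remainder {remainder})")
print(f"onset at {onset_fraction[0]:.0%} to {onset_fraction[1]:.0%} of full saturation")
```

Under these assumptions, saturation effects begin when the summed signal reaches a little over half of the full-saturation level, consistent with only part of the raw samples in a half period hitting the ADC limit.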
